SoftServe Genie Express: Production Conversational Analytics on Your Lakehouse, in Four Weeks

In brief

- Most enterprises stall when trying to open Lakehouse data to business users through a governed, natural-language interface.

- SoftServe Genie Express is a four-week implementation that takes teams from a candidate use case to production-ready Genie spaces using native Databricks capabilities.

- The result is a governed conversational analytics solution with no custom stack to maintain and no second security model to manage.

Why Conversational Analytics Still Feels Far Off for Most Teams

Most enterprises we talk to already have their data in Databricks. The Lakehouse is in place, the medallion layers are loaded, dashboards are running, and a few teams have wired up Genie for ad-hoc exploration. What stalls is the next step: opening that data to business users through a natural-language interface that returns trustworthy answers and stays governed.

Historically, that next step has been a project, not a feature, and not because the technology was hard to find. The problem was that there was so much of it. Teams stitch together a vector store, a retriever, a custom NL2SQL service, an LLM gateway, a semantic layer somewhere in the middle, role-based access at every hop, and an evaluation harness on top to keep the whole thing honest. Each piece is reasonable. Together, they add up to a long engagement.

We have watched a lot of those engagements. The pattern repeats. Early on, the demo answers a handful of questions, and the energy is high. Further along, governance starts asking why the bot is returning salary data it shouldn't see. By the time the project finally ships, the team is maintaining a custom stack that resembles half a product, and the original use case has moved on.

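The friction points are familiar: too many components to integrate and secure, governance gaps that surface late in the project, and a custom stack that outlives the use case it was built for.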

What Changed in Databricks

The platform now ships most of the parts that used to make up that project. Genie spaces give you a conversational interface over governed data, with retrieval and SQL generation handled inside the platform rather than outside it. Unity Catalog metric views let you define business semantics once and reuse them across dashboards, Genie, and downstream agents, so that revenue means the same thing wherever it appears. Knowledge Stores give Genie the use-case context that a generic RAG layer cannot supply: glossaries, sample question-answer pairs, and links to source documents. Databricks One puts the chat experience front and center for business users without a separate front-end project. The Conversational API hands the same conversation to your applications and agents when you need it elsewhere.

The pieces are designed to fit together. Permissions, lineage, and audit are the same Unity Catalog you already use. There is no second security model to present to a CISO.

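That leaves a narrower question: how quickly can a team put those pieces to work on a real use case? That is the question SoftServe Genie Express is built around.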

SoftServe Genie Express

SoftServe Genie Express is a four-week, opinionated implementation pattern that takes a customer from a candidate use case to a working set of Genie spaces in production-shaped form. We use the Databricks-native capabilities described above, and we resist the urge to add layers that are not earning their keep.

In those four weeks, we sit with you to analyze your business use cases and the data already available in your Lakehouse. We then stand up Genie spaces backed by tailored Unity Catalog Business Semantics models and per-use-case Knowledge Stores. By the end, you have conversational chatbots that users can access through Databricks One and that you can embed in your own applications or plug into a wider agentic ecosystem through the Conversational API.

The Architecture, Layer by Layer

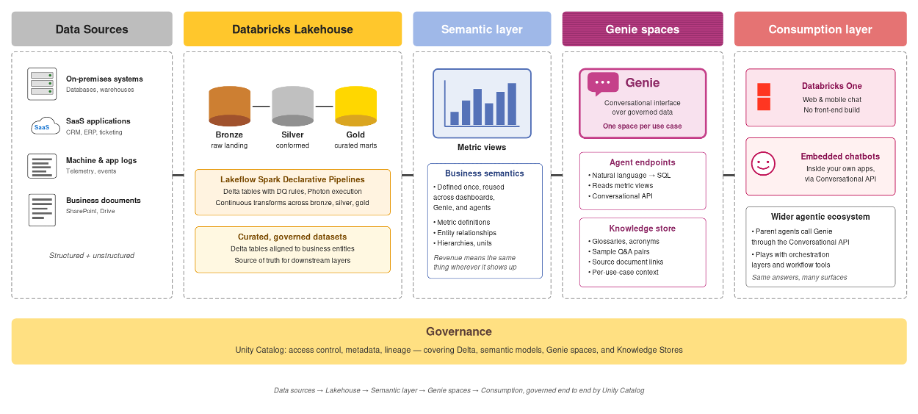

Data sources sit on the left of the picture: on-premises systems, SaaS applications, machine and application logs, business documents from SharePoint and similar repositories. They land in the Lakehouse and pass through bronze, silver, and gold the way they always have. Nothing exotic, and nothing we change just because a chatbot is being added on top.

Above the gold layer is the semantic layer. Metric views in Unity Catalog define the business meaning of each metric and dimension. This is where we encode "What does revenue mean?" and "Which date counts as the close date?" Genie reads from this layer, which is why answers stay consistent whether the same question comes from a dashboard, a notebook, or a chat window.
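To make "define once, reuse everywhere" concrete, here is a minimal Python sketch of the idea behind a metric view: one definition of a metric that every consumer compiles into SQL with the same filter and the same date column. It is not Unity Catalog metric view syntax (metric views are declared in Unity Catalog itself); the `Metric` class, the `compile_query` helper, the "Closed Won" rule, and the `main.sales.opportunities` table are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    """A single business definition of a metric, owned by the semantic layer."""
    name: str
    expression: str                  # aggregate SQL expression
    source: str                      # governed gold-layer table (hypothetical)
    date_column: str                 # the date that "counts" for this metric
    filters: tuple[str, ...] = ()    # conditions every consumer inherits


# Hypothetical definition: revenue is closed-won amount, dated by close date.
REVENUE = Metric(
    name="revenue",
    expression="SUM(amount)",
    source="main.sales.opportunities",
    date_column="close_date",
    filters=("stage = 'Closed Won'",),
)


def compile_query(metric: Metric, group_by: str, start: str, end: str) -> str:
    """Render SQL for any consumer: dashboard, notebook, or chat.

    Illustration only: values are inlined for readability; real code should
    use query parameters rather than string formatting.
    """
    conditions = [
        *metric.filters,
        f"{metric.date_column} >= '{start}'",
        f"{metric.date_column} < '{end}'",
    ]
    return (
        f"SELECT {group_by}, {metric.expression} AS {metric.name} "
        f"FROM {metric.source} "
        f"WHERE {' AND '.join(conditions)} "
        f"GROUP BY {group_by}"
    )


# A dashboard tile and a chat question group differently but share one definition.
dashboard_sql = compile_query(REVENUE, "region", "2024-01-01", "2024-04-01")
chat_sql = compile_query(REVENUE, "product_line", "2024-01-01", "2024-04-01")
print(chat_sql)
```

The point of the design is what callers cannot do: neither one restates the filter or picks its own date column, which is what keeps "revenue" consistent across surfaces.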

Genie spaces sit on top of the semantic layer. Each space is scoped to a use case and is paired with its own Knowledge Store, so the agent has context appropriate to that use case rather than a generic prompt. The Knowledge Store is where domain glossaries, common abbreviations, sample question-answer pairs, and links to source documents live. It is also where most of the per-customer tuning happens during the engagement.
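In the product, the Knowledge Store is configured inside the Genie space rather than written as code, and Genie's use of it is internal to the platform. Its contents are still easy to picture as data. The sketch below models one per-use-case store in Python and shows what that context buys: an abbreviation is expanded, and the matching definition, source document, and trusted sample answer are gathered for the question. The `KnowledgeStore` class, its fields, the term-matching logic, and the example values are all hypothetical.

```python
import re
from dataclasses import dataclass, field


@dataclass
class KnowledgeStore:
    """Hypothetical model of one use case's context. Glossary keys are lowercase."""
    glossary: dict[str, str] = field(default_factory=dict)       # term -> definition
    abbreviations: dict[str, str] = field(default_factory=dict)  # ABBR -> term
    sample_qa: dict[str, str] = field(default_factory=dict)      # question -> trusted SQL
    doc_links: dict[str, str] = field(default_factory=dict)      # term -> source document

    def expand(self, question: str) -> str:
        """Replace known abbreviations (matched case-insensitively) with full terms."""
        return re.sub(
            r"\b[A-Za-z]+\b",
            lambda m: self.abbreviations.get(m.group(0).upper(), m.group(0)),
            question,
        )

    def context_for(self, question: str) -> dict:
        """Collect the definitions, sources, and trusted examples for one question."""
        expanded = self.expand(question).lower()
        terms = [t for t in self.glossary if t in expanded]
        return {
            "question": expanded,
            "definitions": {t: self.glossary[t] for t in terms},
            "sources": {t: self.doc_links[t] for t in terms if t in self.doc_links},
            "examples": {
                q: sql for q, sql in self.sample_qa.items()
                if any(t in q.lower() for t in terms)
            },
        }


# Hypothetical finance use case.
store = KnowledgeStore(
    glossary={"annual recurring revenue":
              "Annualized value of active subscriptions, excluding one-off fees."},
    abbreviations={"ARR": "annual recurring revenue"},
    sample_qa={"What is annual recurring revenue by region?":
               "SELECT region, SUM(arr_usd) AS arr FROM main.finance.subscriptions GROUP BY region"},
    doc_links={"annual recurring revenue": "https://intranet.example.com/finance/arr-policy"},
)
ctx = store.context_for("What was ARR last quarter?")
print(ctx["question"])        # what was annual recurring revenue last quarter?
print(list(ctx["examples"]))  # ['What is annual recurring revenue by region?']
```

Keeping one store per use case is what keeps the matches relevant: a finance store never offers a support-desk definition of "ARR", because it does not hold one.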

The consumption layer is where users meet the system. Databricks One provides a chat interface in the Databricks UI, with no front-end build required. The Conversational API lets you embed the same conversation into a product you already ship, or hand it off to a parent agent in a wider agentic workflow.
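For the embedded and agentic paths, the integration boils down to a request-and-poll loop: start a conversation in a Genie space, then poll the resulting message until it settles. The stdlib-only Python sketch below shows that loop. The endpoint paths, the `conversation_id`/`message_id`/`status` fields, and the status values reflect our reading of the API Databricks documents as the Genie Conversation API; treat them as assumptions to check against the current reference. `ask_genie`, `http_send`, and the injectable `send` transport are hypothetical helpers, the last one there so the loop can run offline. In practice, the Databricks SDK's Genie client is usually a better choice than hand-rolled HTTP.

```python
import json
import time
import urllib.request
from typing import Callable, Optional

# Assumed terminal message statuses; verify against the current API reference.
TERMINAL_STATUSES = {"COMPLETED", "FAILED", "CANCELLED"}

# send(method, path, json_body_or_None) -> parsed JSON response
Send = Callable[[str, str, Optional[dict]], dict]


def http_send(host: str, token: str) -> Send:
    """Real transport over HTTPS; the offline demo below does not use it."""
    def send(method: str, path: str, body: Optional[dict]) -> dict:
        data = json.dumps(body).encode() if body is not None else None
        request = urllib.request.Request(
            f"{host}{path}",
            data=data,
            method=method,
            headers={"Authorization": f"Bearer {token}",
                     "Content-Type": "application/json"},
        )
        with urllib.request.urlopen(request) as response:
            return json.loads(response.read())
    return send


def ask_genie(send: Send, space_id: str, question: str,
              poll_seconds: float = 2.0, max_polls: int = 60) -> dict:
    """Start a conversation in a Genie space and poll until the message settles."""
    base = f"/api/2.0/genie/spaces/{space_id}"  # assumed path prefix
    started = send("POST", f"{base}/start-conversation", {"content": question})
    conversation_id, message_id = started["conversation_id"], started["message_id"]
    path = f"{base}/conversations/{conversation_id}/messages/{message_id}"
    for _ in range(max_polls):
        message = send("GET", path, None)
        if message.get("status") in TERMINAL_STATUSES:
            return message
        time.sleep(poll_seconds)
    raise TimeoutError(f"Genie message {message_id} did not settle in time")


# Offline demo: a fake transport that reports one in-progress poll, then done.
calls: list[tuple[str, str]] = []
pending = ["EXECUTING_QUERY", "COMPLETED"]


def fake_send(method: str, path: str, body: Optional[dict]) -> dict:
    calls.append((method, path))
    if path.endswith("/start-conversation"):
        return {"conversation_id": "c1", "message_id": "m1"}
    return {"status": pending.pop(0)}


answer = ask_genie(fake_send, "demo-space", "What was revenue by region last quarter?",
                   poll_seconds=0)
print(answer["status"], len(calls))  # COMPLETED 3
```

Injecting the transport keeps the polling logic testable and lets the same loop sit behind a product widget or inside a parent agent's tool call.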

Unity Catalog runs underneath all of this. Access control, metadata, and lineage are the same for Genie as for any other Lakehouse workload. We do not bolt on a second governance model, which is a small sentence with significant operational consequences.
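The operational consequence of a single governance model is that a policy is written once and the chat surface simply inherits it. The plain-Python simulation below makes that concrete with the salary example from earlier: one mask, applied to every query whether it arrives through Genie or a dashboard. `Principal`, `mask_salary`, `run_query`, and the in-memory audit log are hypothetical stand-ins. In Unity Catalog itself, the mask would be a SQL function attached to the column, and enforcement happens in the platform rather than in application code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Principal:
    """The identity a query runs as, whatever surface it came from."""
    name: str
    groups: frozenset[str]


audit_log: list[tuple[str, str]] = []


def mask_salary(principal: Principal, row: dict) -> dict:
    """One policy, loosely modeled on a column mask: only HR sees salary."""
    if "hr" in principal.groups:
        return row
    return {**row, "salary": None}


def run_query(principal: Principal, rows: list[dict], surface: str) -> list[dict]:
    """Every surface runs as the caller, so the same policy applies everywhere.

    `surface` is recorded for audit only; it never changes what is returned.
    """
    audit_log.append((principal.name, surface))
    return [mask_salary(principal, row) for row in rows]


# Toy data for the demo.
employees = [{"name": "A. Example", "dept": "Sales", "salary": 50000}]
analyst = Principal("analyst@example.com", frozenset({"sales"}))
hr_lead = Principal("hr.lead@example.com", frozenset({"hr"}))

via_chat = run_query(analyst, employees, surface="genie")
via_dashboard = run_query(analyst, employees, surface="dashboard")
print(via_chat == via_dashboard, via_chat[0]["salary"])            # True None
print(run_query(hr_lead, employees, surface="genie")[0]["salary"])  # 50000
```

Nothing in the chat path knows about salaries. That is the property a CISO cares about: adding a surface adds an entry to the audit trail, not a new policy to review.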

How the Four Weeks Are Spent

Week one is discovery, not theatre. We sit with the people who own the use case and the people who own the data, and we figure out which questions are being asked, which decisions hang on the answers, and which datasets are in shape to support them. Some candidate use cases drop out here. That is the point. We would rather lose a use case in week one than carry it for three more weeks and discover that the data is not ready.
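Week one is a conversation, not a script, but the filter it applies can be written down. The Python sketch below assumes a deliberately simple readiness rule chosen for illustration: a candidate goes forward only if the business can name concrete questions and every dataset it depends on is in gold, has an owner, and is documented. `Dataset`, `UseCase`, `readiness_gaps`, `triage`, the criteria, and the table names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dataset:
    name: str
    layer: str          # "bronze", "silver", or "gold"
    has_owner: bool     # someone can vouch for the definitions
    documented: bool    # table and column comments exist


@dataclass(frozen=True)
class UseCase:
    name: str
    decision: str                      # the decision that hangs on the answers
    sample_questions: tuple[str, ...]  # questions the business actually asks
    datasets: tuple[str, ...]          # tables the answers depend on


def readiness_gaps(use_case: UseCase, catalog: dict[str, Dataset]) -> list[str]:
    """Return the reasons a use case is not ready; an empty list means keep it."""
    gaps = []
    if not use_case.sample_questions:
        gaps.append("no concrete questions from the business owner")
    for name in use_case.datasets:
        ds = catalog.get(name)
        if ds is None:
            gaps.append(f"{name}: not in the Lakehouse yet")
        elif ds.layer != "gold":
            gaps.append(f"{name}: only available in {ds.layer}")
        elif not (ds.has_owner and ds.documented):
            gaps.append(f"{name}: no owner or no documentation")
    return gaps


def triage(use_cases: list[UseCase], catalog: dict[str, Dataset]):
    """Split candidates into those carried into week two and those dropped, with reasons."""
    keep, drop = [], {}
    for uc in use_cases:
        gaps = readiness_gaps(uc, catalog)
        if gaps:
            drop[uc.name] = gaps
        else:
            keep.append(uc.name)
    return keep, drop


# Hypothetical candidates and catalog entries.
catalog = {
    "main.sales.opportunities": Dataset("main.sales.opportunities", "gold", True, True),
    "main.support.tickets": Dataset("main.support.tickets", "silver", True, False),
}
candidates = [
    UseCase("pipeline review", "where to focus sales coaching",
            ("Which regions missed their pipeline target?",), ("main.sales.opportunities",)),
    UseCase("support backlog", "how to staff the support desk",
            ("Which product has the oldest open tickets?",),
            ("main.support.tickets", "main.support.sla")),
]
keep, drop = triage(candidates, catalog)
print(keep)  # ['pipeline review']
print(drop["support backlog"])
```

Returning reasons rather than a yes/no is the useful part: a dropped use case comes back with a to-do list for the data team, which is what makes it a candidate for a later round.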

Week two is semantic modeling. We design the metric views and entity definitions that let Genie answer questions consistently. We also configure the Knowledge Store with the context Genie needs to behave like a domain expert rather than a generalist: glossaries, frequently asked questions with the answers your team already trusts, links back to the source documents, and known caveats. This is the unglamorous work that decides whether the chatbot is right or merely fluent.

Week three is integration and access. We wire up the consumption surfaces you will actually use. That might be Databricks One for internal users, an embedded widget in an existing application, the Conversational API for an agent you are building elsewhere, or some combination of the three. Permissions flow through Unity Catalog the same way they do for your other Lakehouse workloads, so the security review is the one your team already runs.

Week four is hardening. We run evaluations against a representative question set, tune the semantic model and Knowledge Store where the answers are off, and document the configuration so your team owns it after we leave. You finish the engagement with three things: working Genie spaces in production-shaped form, the patterns to extend them yourselves, and a clear view of what the next set of use cases looks like.
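Week four's evaluation loop is small enough to sketch. This version assumes each question in the representative set has an expected result signed off by the business owner, and it compares result rows rather than generated SQL text, because two different queries can be equally correct. `EvalCase`, `rows_match`, and `evaluate` are hypothetical names, and `answer` is a stub; in an engagement it would ask the Genie space and fetch the query result.

```python
import math
from dataclasses import dataclass
from typing import Callable

Rows = list[tuple]


@dataclass(frozen=True)
class EvalCase:
    question: str
    expected: Rows   # result the business owner has signed off on


def rows_match(actual: Rows, expected: Rows, rel_tol: float = 1e-6) -> bool:
    """Compare result sets ignoring row order, with a tolerance for numbers.

    Assumes rows contain sortable values (no None mixed with other types).
    """
    if len(actual) != len(expected):
        return False
    for a, e in zip(sorted(actual), sorted(expected)):
        if len(a) != len(e):
            return False
        for x, y in zip(a, e):
            if isinstance(x, (int, float)) and isinstance(y, (int, float)):
                if not math.isclose(x, y, rel_tol=rel_tol):
                    return False
            elif x != y:
                return False
    return True


def evaluate(answer: Callable[[str], Rows], cases: list[EvalCase]) -> tuple[float, list[str]]:
    """Run every case; return accuracy and the questions that need tuning."""
    failures = [c.question for c in cases if not rows_match(answer(c.question), c.expected)]
    accuracy = 1 - len(failures) / len(cases) if cases else 0.0
    return accuracy, failures


# Stub standing in for a call to the Genie space; values are toy data.
canned = {
    "Revenue by region last quarter": [("EMEA", 120.0), ("AMER", 340.0)],
    "Number of open deals": [(17,)],
}
cases = [
    EvalCase("Revenue by region last quarter", [("AMER", 340.0), ("EMEA", 120.0)]),
    EvalCase("Number of open deals", [(18,)]),
]
accuracy, failures = evaluate(lambda q: canned.get(q, []), cases)
print(accuracy, failures)  # 0.5 ['Number of open deals']
```

The failure list is the tuning backlog: each miss points at a metric definition, a glossary entry, or a sample answer to revisit, and the same run is repeated after every change.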

What Is Included

- Use case and data assessment, focused on the business questions that justify the build and the datasets that can credibly support them.

- Unity Catalog Business Semantics modeling, including metric views and entity definitions tailored to your data and your reporting conventions.

- Per-use-case Knowledge Store configuration, with glossaries, sample dialogues, and document links that give Genie real context.

- Genie space build and tuning, evaluated against a representative question set drawn from the people who will use it.

- Integration with the consumption surface that fits you: Databricks One, an embedded chatbot inside a target application, or the Conversational API for use inside a broader agentic ecosystem.

- A short rollout plan that covers governance review, change management, and the candidate use cases worth tackling next.

Deliverables

- Working Genie spaces for the agreed use cases, deployed against your Lakehouse and ready for user access.

- Tailored Unity Catalog Business Semantics models that your team can extend after we leave.

- Per-use-case Knowledge Stores with the domain context Genie needs for accurate answers.

- Conversational chatbots reachable through Databricks One, embeddable in your own applications, and integrable with wider agent ecosystems through the Conversational API.

- A governance-ready deployment that uses Unity Catalog the same way the rest of your platform does.

- A target architecture document that lays out where this goes next, including obvious extensions and the parts that need more thought.

Why This Works Better Than Building from Scratch

Speed is the obvious answer. A four-week timeline, compared to a long custom build, changes which use cases are worth tackling and which conversations you can have with the business. A long wait kills momentum. A four-week delivery preserves it.

The less obvious answer is governance. When the chatbot inherits its permissions, lineage, and audit trail from Unity Catalog, the security review on the way to production is one your team already runs for any other Lakehouse workload. There is no second system to explain, and no third-party data path to defend.

The third answer matters more over time. You are not maintaining a custom stack. As Databricks improves Genie, semantic modeling, and Knowledge Stores, you get the improvements. If you have built the equivalent capability with bespoke services, you are maintaining a fork of the industry forever, and the cost of that fork compounds.

Where This Fits, and Where It Does Not

Genie Express works best when you already have data in the Lakehouse, have a defensible use case with measurable business value, and are willing to start with a focused set of questions rather than a boil-the-ocean assistant. It works well alongside other Databricks-native investments, including BI dashboards your users already trust.

It is not the right starting point if your data still resides mostly in source systems and the Lakehouse work has not yet happened. It is also not the right fit when the questions you want answered require agentic action rather than analytics. In such cases, we point customers to a different conversation, often the SoftServe Agentic Catalyst program, which is built around outcome-driven AI agents tailored to high-automation business processes.

Conclusion

The build-it-yourself era of conversational analytics is mostly over. The platform got there. The work that remains is the work that always matters: choosing the right use cases, modeling the semantics carefully, giving the agent real context, and integrating it into the surfaces your users already live in.

SoftServe Genie Express is a four-week way to do that work without the long detour of building it from scratch. If you have a candidate use case in mind, data sitting in the Lakehouse, and questions your business would rather not keep answering by hand, the math is straightforward.

Talk to us about your Genie Express use case